Data modeling is the basis for every data-driven decision in companies. It is a central element of digital transformation and determines how well data can be used, expanded, and analyzed. Whether in software development, the organization of a data warehouse, or AI projects—without a clean data structure, there can be no scalable solutions.

The cornerstone of any data strategy – understanding modern data modeling

What is data modeling?

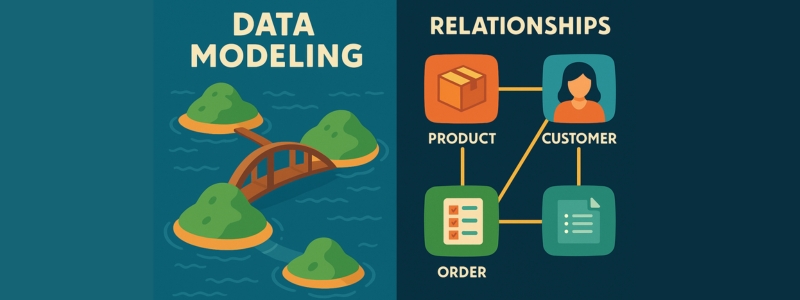

A data model can be compared to a blueprint.

Before an architect builds a house, they create a drawing: it shows where the walls are, how the rooms are connected, and where the electricity and water run. A data model works in exactly the same way: it defines what information is stored, how it is connected, and how it can be used.

Data modeling therefore means organizing real information into an understandable structure. This results in clear models—often in the form of diagrams or tables—that show how information is linked. This makes it easier to store, retrieve, and process data.

A good model forms the basis for all data handling. It makes complex structures understandable and converts them into standardized formats. Typical representations include entity-relationship diagrams and UML diagrams. The goal is to create a logical structure that is equally understandable for specialist departments and developers.

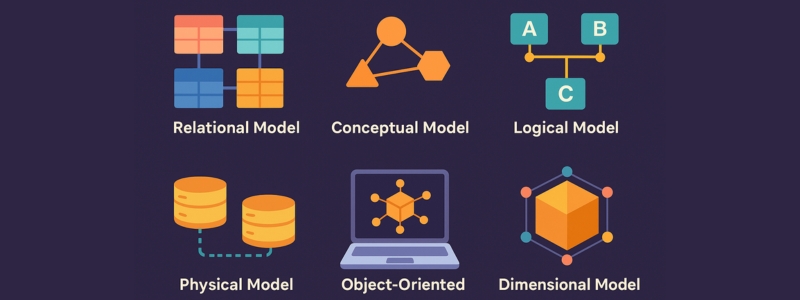

As the amount of data grows, so does the challenge of keeping it in order. Terms such as relational, hierarchical, and physical data models show that there are many ways to structure data meaningfully. According to Wikipedia, the origins of these approaches date back to the early days of computer science, and today they are more relevant than ever.

Why is data modeling important?

Whether for a new data warehouse, business intelligence projects, or when merging data from different sources, a well-designed data model improves data quality, prevents duplicate entries, and enables accurate analysis. Companies that model their data cleanly make better decisions—faster and more sustainably.

A good model reveals weaknesses early on, can be reused, and facilitates collaboration between departments. The value of clear data structures is particularly evident when switching to new systems or during company mergers: they often determine whether a project succeeds or fails.

AI meets data modeling – DataNaicer from uNaice

Many companies struggle with chaotic data sources, unstructured information, and high maintenance costs. DataNaicer from uNaice solves precisely this problem: it combines fixed rules with artificial intelligence and automatically creates structured product data – even with large, confusing amounts of data.

Instead of manually creating lots of individual templates, DataNaicer uses a flexible system: just a few templates are enough to automatically describe thousands of products, precisely tailored to the existing data structure. It connects via API or webhook and can be integrated into systems such as a data warehouse.
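To make the template idea tangible, here is a minimal sketch using Python's standard `string.Template`. It is not DataNaicer's actual template format or API; the categories, attribute names, and wording are invented for illustration. It only shows how a small number of templates can cover many structured product records.

```python
from string import Template

# One template per product category (illustrative; not DataNaicer's real format).
TEMPLATES = {
    "table": Template("The $name is made of $material and measures $width_cm cm in width."),
    "chair": Template("The $name comes in $color and is made of $material."),
}

# Hypothetical structured product records.
products = [
    {"category": "table", "name": "Oak table", "material": "oak", "width_cm": 120},
    {"category": "chair", "name": "Chair", "material": "beech", "color": "white"},
]

# Pick the template by category and fill it from the record's attributes.
descriptions = [TEMPLATES[p["category"]].substitute(p) for p in products]

for text in descriptions:
    print(text)
```

The approach only works when every record reliably carries the attributes its template expects, which is why a consistent data structure comes first.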

Companies such as IBM also demonstrate that flexible modeling approaches are more important today than ever before. DataNaicer takes this flexibility to a new level.

From concept to structure – how data modeling helps companies move forward

Data models in everyday work: where they really help

One particularly important area of application for data modeling is data integration – i.e., combining information from different sources. Especially when a company uses many different systems, a uniform model helps to prepare all data in a comprehensible and compatible way.
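As a minimal sketch of what a uniform model means for integration, the following Python snippet maps records from two hypothetical source systems onto one shared `Product` structure. The shop and ERP exports, and field names such as `art_nr` or `price_cents`, are assumptions made up for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Product:
    """Target structure shared by all systems (illustrative)."""
    sku: str
    name: str
    price_eur: float


def from_shop(row: dict) -> Product:
    # Hypothetical shop export: price in cents, name may carry whitespace.
    return Product(
        sku=row["sku"].strip().upper(),
        name=row["title"].strip(),
        price_eur=row["price_cents"] / 100,
    )


def from_erp(row: dict) -> Product:
    # Hypothetical ERP export: different field names, price as decimal-comma string.
    return Product(
        sku=row["art_nr"].strip().upper(),
        name=row["bezeichnung"].strip(),
        price_eur=float(row["preis"].replace(",", ".")),
    )


shop_rows = [{"sku": "ab-100", "title": " Oak table ", "price_cents": 49900}]
erp_rows = [{"art_nr": "AB-100", "bezeichnung": "Oak table", "preis": "499,00"}]

products = [from_shop(r) for r in shop_rows] + [from_erp(r) for r in erp_rows]

# Once both sources share one structure, duplicates become visible.
unique_products = set(products)
```

Here the two source rows describe the same product, so `unique_products` contains a single entry even though the raw exports look nothing alike.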

A well-designed data model is not just a technical plan. It is the basis for all data-based processes in the company. It shows how information is connected and ensures a common language – whether in IT, marketing, or logistics.

Modeling techniques at a glance: From logical to physical

What types of data models are there?

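There are three basic types of data model, distinguished by their level of abstraction: the conceptual model (which business objects exist and how they relate), the logical model (entities, attributes, and relationships, independent of any specific database), and the physical model (tables, columns, data types, and indexes in a concrete system).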

A good overview of these model types can be found in the Salesforce Data Modeling Guide.

Not just for IT: Data modeling affects the entire company

A data model is like a city map. Every department—from sales to accounting—can use it for orientation. Without a common model, it's easy to lose track of things: teams work at cross-purposes, duplicate data is created, and decisions are based on false premises.

Many believe that data modeling is only for IT teams. But in reality, it helps everyone—from marketing to product development to management. A clean model brings clarity to data, improves its quality, and makes it usable everywhere.

Practical example: How AI helps with data modeling

A manufacturer with over 10,000 products used uNaice's DataNaicer to automate its data modeling. The challenge: confusing CSV files with different attributes. The solution: a flexible model that intelligently structures all products and their properties. The result: 80% less effort in text generation – and significantly better data quality.

Data modeling techniques in detail – from relational models to object-oriented structures

Overview: What types of modeling are there?

Different data modeling techniques are used depending on the application and system environment. The relational model is particularly widespread. It structures data in tabular form, with each table representing an entity. Primary and foreign keys ensure clear links – ideal for structured data with fixed relationships.

In addition, there are three central model types that are distinguished by their degree of abstraction: conceptual, logical, and physical.

A clear comparison of these models can be found in the article by Informatik Aktuell.

A Berlin-based software company implemented precisely these three stages in practice – from the initial sketch to the final system design. With the support of DataNaicer, the project was implemented efficiently. The result: 55% shorter development time for new features.

Object-oriented & dimensional – when which technology is appropriate

Object-oriented modeling is ideal for complex applications with many attributes. It originated in software development and is particularly flexible when requirements change.
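A short Python sketch can illustrate the object-oriented approach. The class and attribute names are hypothetical; the point is that a subclass inherits structure and behaviour, and that an open attribute dictionary can absorb new requirements.

```python
from dataclasses import dataclass, field


@dataclass
class Product:
    sku: str
    name: str
    # Open-ended attributes let the model take on new properties later.
    attributes: dict[str, str] = field(default_factory=dict)

    def describe(self) -> str:
        return f"{self.name} ({self.sku})"


@dataclass
class Furniture(Product):
    material: str = "unknown"

    def describe(self) -> str:
        # The subclass extends inherited behaviour instead of duplicating it.
        return f"{super().describe()}, material: {self.material}"


table = Furniture(
    sku="AB-100",
    name="Oak table",
    material="oak",
    attributes={"color": "natural"},
)
print(table.describe())
```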

Dimensional models, on the other hand, are optimized for analysis purposes. They structure data in such a way that quick evaluations—for example, in a data warehouse—are possible. They offer clear advantages for reporting, especially when dealing with large amounts of data.
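One common form of dimensional model is the star schema: a central fact table with measures, surrounded by dimension tables that describe them. The sketch below uses Python's built-in `sqlite3` module purely as a stand-in for a data warehouse; table names, columns, and figures are invented for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE dim_product (product_key INTEGER PRIMARY KEY, category TEXT);
CREATE TABLE dim_date    (date_key INTEGER PRIMARY KEY, year INTEGER, month INTEGER);
-- The fact table holds the measures and points to the dimensions.
CREATE TABLE fact_sales (
    product_key INTEGER REFERENCES dim_product(product_key),
    date_key    INTEGER REFERENCES dim_date(date_key),
    quantity    INTEGER,
    revenue     REAL
);
INSERT INTO dim_product VALUES (1, 'Tables'), (2, 'Chairs');
INSERT INTO dim_date    VALUES (20240115, 2024, 1), (20240203, 2024, 2);
INSERT INTO fact_sales  VALUES
    (1, 20240115, 2, 998.0),
    (2, 20240115, 4, 396.0),
    (2, 20240203, 1,  99.0);
""")

# A typical report: one join per dimension, then an aggregation.
revenue_by_category = conn.execute("""
    SELECT p.category, SUM(f.revenue)
    FROM fact_sales AS f
    JOIN dim_product AS p ON p.product_key = f.product_key
    GROUP BY p.category
    ORDER BY p.category
""").fetchall()
```

Reports stay simple because every question follows the same pattern: join the fact table to the dimensions of interest and aggregate.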

Practical example: Optimization with dimensional modeling

An e-commerce company switched from a purely relational model to a combined, dimensional structure – with noticeable success. Report loading times improved by 70%, and maintenance costs fell significantly.

Structure and relationships – How modern data models work in practice

More than just tables: How to create a good data model

A data model is the blueprint for the structure of an information system. It defines how data is stored, linked, and processed. A well-designed model not only saves storage space, but also makes it easier to make changes and additions later on.

The important thing here is balance: the model must be clearly structured and at the same time practical to use – especially with large amounts of data. Visualization tools such as Toad Data Modeler help to clearly illustrate complex relationships.

A simple illustration: Imagine entities as islands in a lake—the relationships between them are bridges. Without these bridges, the data islands remain isolated. Only well-planned connections create a usable network.

Understanding relationships—the heart of any modeling

In relational databases, relationships are crucial. They define how data records are connected to each other, for example 1:1, 1:N, or N:M.

Example: A company manages products, customers, and orders. A product can appear in multiple orders. A customer can place many orders. Only when these relationships are clearly modeled does data remain consistent and analyzable.
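The product, customer, and order example can be sketched as a small schema. This is one possible design under stated assumptions: table and column names are hypothetical, and SQLite (via Python's `sqlite3` module) is used only because it runs without setup. The 1:N relationship is a foreign key on the order; the N:M relationship between orders and products is resolved through a junction table.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")  # SQLite enforces foreign keys only when enabled
conn.executescript("""
CREATE TABLE customer (customer_id INTEGER PRIMARY KEY, name TEXT NOT NULL);
CREATE TABLE product  (product_id  INTEGER PRIMARY KEY, name TEXT NOT NULL);
-- 1:N: one customer places many orders
CREATE TABLE customer_order (
    order_id    INTEGER PRIMARY KEY,
    customer_id INTEGER NOT NULL REFERENCES customer(customer_id)
);
-- N:M: an order contains many products, a product appears in many orders
CREATE TABLE order_item (
    order_id   INTEGER NOT NULL REFERENCES customer_order(order_id),
    product_id INTEGER NOT NULL REFERENCES product(product_id),
    quantity   INTEGER NOT NULL,
    PRIMARY KEY (order_id, product_id)
);
INSERT INTO customer VALUES (1, 'Example GmbH');
INSERT INTO product VALUES (1, 'Oak table'), (2, 'Chair');
INSERT INTO customer_order VALUES (10, 1), (11, 1);
INSERT INTO order_item VALUES (10, 1, 1), (10, 2, 4), (11, 2, 2);
""")

# In how many orders does each product appear?
orders_per_product = conn.execute("""
    SELECT p.name, COUNT(*)
    FROM order_item AS i
    JOIN product AS p ON p.product_id = i.product_id
    GROUP BY p.name
    ORDER BY p.name
""").fetchall()
```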

Documentation tools ensure that these structures remain understandable in the long term – even with future adjustments.

Modeling in MySQL & Co.

Implementation takes place in systems such as MySQL, PostgreSQL, or Oracle. These databases offer functions for automatically checking data types, keys, and dependencies – particularly important for large amounts of data.
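The following sketch shows what such automatic checks look like in practice. It uses SQLite through Python's `sqlite3` module as a lightweight stand-in; MySQL, PostgreSQL, and Oracle offer comparable constraints, though syntax and defaults differ. The tables and values are invented for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")  # SQLite checks foreign keys only when enabled
conn.executescript("""
CREATE TABLE product (
    product_id INTEGER PRIMARY KEY,
    name       TEXT NOT NULL,
    price      REAL NOT NULL CHECK (price >= 0)
);
CREATE TABLE stock (
    product_id INTEGER NOT NULL REFERENCES product(product_id),
    quantity   INTEGER NOT NULL
);
INSERT INTO product VALUES (1, 'Oak table', 499.0);
""")

invalid_statements = [
    "INSERT INTO product VALUES (1, 'Duplicate key', 10.0)",   # primary key already used
    "INSERT INTO product VALUES (2, 'Negative price', -5.0)",  # violates the CHECK constraint
    "INSERT INTO stock VALUES (99, 3)",                        # references a missing product
]

rejected = []
for statement in invalid_statements:
    try:
        conn.execute(statement)
    except sqlite3.IntegrityError as exc:
        # The database refuses the row; the application never sees bad data.
        rejected.append(str(exc))

product_count = conn.execute("SELECT COUNT(*) FROM product").fetchone()[0]
```

All three statements are rejected by the database itself, so the rules defined in the model hold regardless of which application writes the data.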

A good model speeds up queries, reduces errors, and makes systems more efficient overall.

Practical example: Scaling with a clean structure

A medium-sized e-commerce company needed to optimize its MySQL database. The starting point: unclear relationship types and duplicate information.

Solution: First, the existing model was analyzed using reverse engineering, then rebuilt using object-oriented modeling. The result: 60% better performance—and 40% fewer errors at checkout.
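Reverse engineering in this sense means reading the model back out of an existing database. As a rough sketch, and not the procedure used in the case above, the snippet below does this for SQLite using its `sqlite_master` catalog and the `table_info` and `foreign_key_list` pragmas; MySQL exposes similar metadata through `information_schema`. The sample tables are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE customer (customer_id INTEGER PRIMARY KEY, name TEXT);
CREATE TABLE customer_order (
    order_id    INTEGER PRIMARY KEY,
    customer_id INTEGER REFERENCES customer(customer_id)
);
""")

model = {}
tables = conn.execute(
    "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name"
).fetchall()

for (table,) in tables:
    # table_info rows: (cid, name, type, notnull, dflt_value, pk)
    columns = [row[1] for row in conn.execute(f"PRAGMA table_info({table})")]
    # foreign_key_list rows: (id, seq, table, from, to, ...)
    references = [
        (row[3], row[2], row[4])  # (local column, referenced table, referenced column)
        for row in conn.execute(f"PRAGMA foreign_key_list({table})")
    ]
    model[table] = {"columns": columns, "references": references}
```

The resulting `model` dictionary lists each table's columns and outgoing references, which is the raw material for redrawing and then improving the existing structure.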

Data modeling in everyday life – challenges and best practices

Between theory and practice: where companies fail

Despite clear concepts for data modeling, many companies have difficulty implementing them. According to a survey by Gartner (2023), only about 30% of companies achieve consistent data quality in their data models. Common reasons include a lack of process-oriented structures, unclear data sources, or inadequate documentation.

The relationship factor: Why “relationship” is at the heart of modeling

In practice, clearly defining relationships is crucial for the functionality of a data model. Incorrectly defined relationships lead to redundancies, data loss, or inefficient queries. This is particularly critical in relational databases, where faulty relationships are often the main cause of subsequent system problems.

Case study: How a manufacturer optimized its data architecture with the help of AI

A medium-sized furniture manufacturer from Germany was faced with the challenge of transferring over 15,000 product data records to a new PIM system. Using uNaice's DataNaicer, unstructured CSV files were converted into a standardized data model—including automated key recognition, relationship definition, and documentation. The result: a 40% reduction in time-to-market, improved data management, and significantly higher data quality.
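How DataNaicer recognizes keys is not described here. As a simple illustration of the general idea only, the sketch below treats a CSV column as a candidate key when its values are non-empty and unique across all rows. The sample data and column names are invented.

```python
import csv
import io

# Invented sample data standing in for a product export.
CSV_TEXT = """sku,name,color,material
AB-100,Oak table,natural,oak
AB-101,Oak table,white,oak
AB-102,Chair,white,beech
"""

rows = list(csv.DictReader(io.StringIO(CSV_TEXT)))


def candidate_keys(records: list[dict]) -> list[str]:
    """Return columns whose values are non-empty and unique across all rows."""
    keys = []
    for column in records[0]:
        values = [record[column].strip() for record in records]
        if all(values) and len(set(values)) == len(values):
            keys.append(column)
    return keys


keys = candidate_keys(rows)
print(keys)
```

On real exports, uniqueness in a sample is only a hint: a column can be unique by coincidence, so candidate keys still need a plausibility check against the business meaning.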

Best practices for sustainable data modeling

Designing scalable data modeling – the right tool for success

When data models reach their limits

Data modeling often appears clear and logical on paper, but in practice many companies quickly reach their limits. Unstructured data volumes, inconsistent formats, and manual maintenance processes lead to delays and quality issues. When thousands or even millions of products, each with dozens of attributes, have to be brought together across multiple markets and data sources, scalability becomes the key requirement. Traditional tools and manual modeling processes can no longer keep up, and structuring, presenting, and processing such data volumes becomes a challenge, especially with regard to consistency, comprehensibility, and reusability.

The answer for large amounts of data: DataNaicer

This is exactly where uNaice's DataNaicer comes in. The SaaS solution combines rule-based modeling logic with artificial intelligence and automatically processes both structured and unstructured data into modelable formats. This not only saves a tremendous amount of time, but also significantly increases data quality.

What makes the difference?

Case study: From CSV chaos to structured data architecture

A manufacturer with over 50,000 products had its data records only as loose CSV files without defined keys or relationships. By using DataNaicer, a consistent data model was built – including conceptual structure, documentation, and automated management. The result: automated text generation for all product pages in four languages – in less than three weeks.

Also for smaller teams: No expert knowledge required

Another advantage: DataNaicer is not a classic developer tool. It was designed so that even non-technical teams can work with it. The interface is intuitive and easy to use—and if you like, uNaice can provide you with managed service support. This means that data modeling is no longer a bottleneck, but a real growth driver.

What types of data modeling are there?

Diversity in modeling: From classic to AI-supported

Data modeling can be divided into different types, depending on the degree of abstraction and the objective. Classically, a distinction is made between conceptual, logical, and physical data models.

In addition, there are object-oriented data models, relational data modeling, dimensional models for OLAP analyses, and hybrid approaches that combine different structures.

For many companies, free data modeling tools are also a good place to start—these often offer graphical interfaces, simple notation, and help with initial understanding.

How is data modeling evolving?

Data modeling is currently facing a paradigm shift: away from rigid tables and toward flexible, semantic models that can automatically adapt to data changes. Techniques such as reverse engineering, AI-based model adaptation, and dynamic templates (as in DataNaicer) are setting new standards.

A forward-looking aspect: data architecture as a service. Companies no longer want to buy tools—they want solutions that solve their data problems. That's exactly what uNaice offers with DataNaicer as a managed service.

Data organization and data warehousing – why data modeling is strategically important

Data modeling is not just a technical tool – it is a strategic lever. Smart modeling today lays the foundation for flexible data architectures, faster decision-making processes, and future-proof scalability tomorrow. In a well-structured organization, it is the key to the efficient use and long-term storage of large amounts of data.

How data modeling improves the organization in the long term

A well-thought-out logical model forms the basis for later physical implementation in systems such as a data warehouse. Especially with growing data volumes, it is important that as much information as possible is not only stored but also made usable for analysis, reporting, and operational processes.
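The step from a logical model to a physical one can be sketched in a few lines. In this illustrative example, the logical model is a plain Python dictionary with abstract types, and a small function translates it into a `CREATE TABLE` statement for one target system. The dictionary layout, the type mapping, and the function name `to_ddl` are assumptions for this sketch, with SQLite as the example target.

```python
import sqlite3

# Logical model: entities, attributes, abstract types; no database specifics.
LOGICAL_MODEL = {
    "product": {
        "attributes": {"product_id": "integer", "name": "text", "price": "decimal"},
        "key": "product_id",
    },
}

# Physical mapping for one target system (SQLite here; other systems differ).
SQLITE_TYPES = {"integer": "INTEGER", "text": "TEXT", "decimal": "NUMERIC"}


def to_ddl(entity: str, spec: dict) -> str:
    """Translate one logical entity into a CREATE TABLE statement."""
    columns = []
    for attr, logical_type in spec["attributes"].items():
        column = f"{attr} {SQLITE_TYPES[logical_type]}"
        if attr == spec["key"]:
            column += " PRIMARY KEY"
        columns.append(column)
    return f"CREATE TABLE {entity} ({', '.join(columns)})"


ddl = to_ddl("product", LOGICAL_MODEL["product"])

conn = sqlite3.connect(":memory:")
conn.execute(ddl)  # the generated statement runs as valid SQLite DDL
print(ddl)
```

Keeping the logical description separate means the same model could be mapped to another database by swapping the type table, without touching the business definitions.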

The use of standardized modeling techniques not only creates efficiency but also reduces the susceptibility to errors. A clear definition of relationships, data types, and business logic in the model prevents later redundancies and data inconsistencies.

One aspect that is often underestimated is abstraction: only those who are able to translate complex issues into simple structures can maintain and develop them in the long term. This is precisely where a methodical approach comes in: from analyzing the technical requirements, through designing suitable models, to the final implementation.

Rule-based procedures—i.e., the application of predefined rules for specific data patterns—play a decisive role here. They help to create a clear data structure that is both scalable and maintainable.
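A rule-based procedure of this kind can be sketched as a list of pattern and action pairs. The rules, attribute names, and sample values below are illustrative assumptions, not a description of any specific product: each rule recognizes one data pattern and converts it into a standardized value.

```python
import re

# Each rule: (attribute, pattern to recognize, function producing the normalized value).
RULES = [
    ("width_cm", re.compile(r"^\s*(\d+(?:[.,]\d+)?)\s*cm\s*$", re.I),
     lambda m: float(m.group(1).replace(",", "."))),
    ("width_cm", re.compile(r"^\s*(\d+(?:[.,]\d+)?)\s*mm\s*$", re.I),
     lambda m: float(m.group(1).replace(",", ".")) / 10),
    ("color", re.compile(r"^\s*(.+?)\s*$"),
     lambda m: m.group(1).lower()),
]


def apply_rules(record: dict) -> dict:
    """Apply the first matching rule per attribute; leave other values untouched."""
    result = dict(record)
    handled = set()
    for attribute, pattern, normalize in RULES:
        value = record.get(attribute)
        if attribute in handled or not isinstance(value, str):
            continue
        match = pattern.match(value)
        if match:
            result[attribute] = normalize(match)
            handled.add(attribute)
    return result


raw_records = [
    {"width_cm": "120 cm", "color": " Natural "},
    {"width_cm": "1500mm", "color": "WHITE"},
]
cleaned = [apply_rules(r) for r in raw_records]
```

Because the rules live in data rather than in scattered code, new patterns can be added by extending the list, which keeps the procedure maintainable as sources grow.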

Example: A company that operates internationally needs a data warehouse that takes both local and global requirements into account. This requires modeling that harmonizes different sources, languages, and business processes, and DataNaicer provides the foundation for precisely this.

Conclusion: Data modeling as the basis for modern data strategies

Data modeling is much more than a technical step in data processing: it is a strategic discipline that shapes the definition, structure, and success of entire data landscapes. Those who model their data in a targeted manner create clear processes, better analyses, and a basis for sound business decisions.

A well-designed data model follows clearly defined rules – from abstraction and the connection of entities to ensuring logical consistency. At the same time, data modeling remains a dynamic field that is constantly evolving with technologies such as AI, automated tools, and new approaches such as DataNaicer.

Especially for companies that want to integrate large amounts of data from different sources, it is crucial to set up a scalable model at an early stage – and thus create a sustainable data architecture. Those who work with the right tools today will not only save resources tomorrow, but also secure long-term competitive advantages.

🚀 Say goodbye to data chaos! With DataNaicer, you can model large amounts of data automatically, efficiently, and accurately.