Contents

- Productivity Increase

- Automating Simple Tasks

- Viral Content for Social Media

- Content Ideas

- Goal-oriented Articles and Blog Posts

- Coding

- Simplifying Complex Concepts

- Outdated Training Dataset

- Research Phase and False Information

- The Hubris

- Problematic Service

- Systematic Errors

Allow me to introduce myself: my name is ChatGPT

GPT-4: A Star is born?

Yes, the AI language model GPT-4 has arrived, and once again the entire internet is buzzing with excitement. It appears that OpenAI's new multimodal model has further expanded its creative writing capabilities and is now better at handling longer contexts and visual input. For the time being, GPT-4 is available only through the paid ChatGPT Plus subscription and as an API for developers building their own applications, with a waitlist for API access.

GPT-4 vs ChatGPT

According to OpenAI CEO Sam Altman, the conversational ability of GPT-4 differs only slightly from that of GPT-3.5, the AI model behind ChatGPT. The architecture is unlikely to have changed fundamentally, and the model will probably remain a neural network whose output is human-like but by no means human-equivalent.

The language processing model ChatGPT is the most prominent member of the GPT-3 model family and was created by the research organization OpenAI. Since its launch, it has caused quite a stir both online and offline. It creates texts and can summarize, translate, or simplify them using deep learning. In this article, we will show to what extent this AI is a true language genius, and why it is nevertheless not the right tool for content automation in all use cases.

OpenAI

OpenAI was founded as a non-profit organization in San Francisco in 2015 by Elon Musk, Sam Altman, and other investors. The ambitious team at OpenAI sees itself as a blue-sky research lab that explores the possibilities of artificial intelligence and makes its results publicly available. The declared goal of OpenAI is the creation of an increasingly powerful, human-like intelligence.

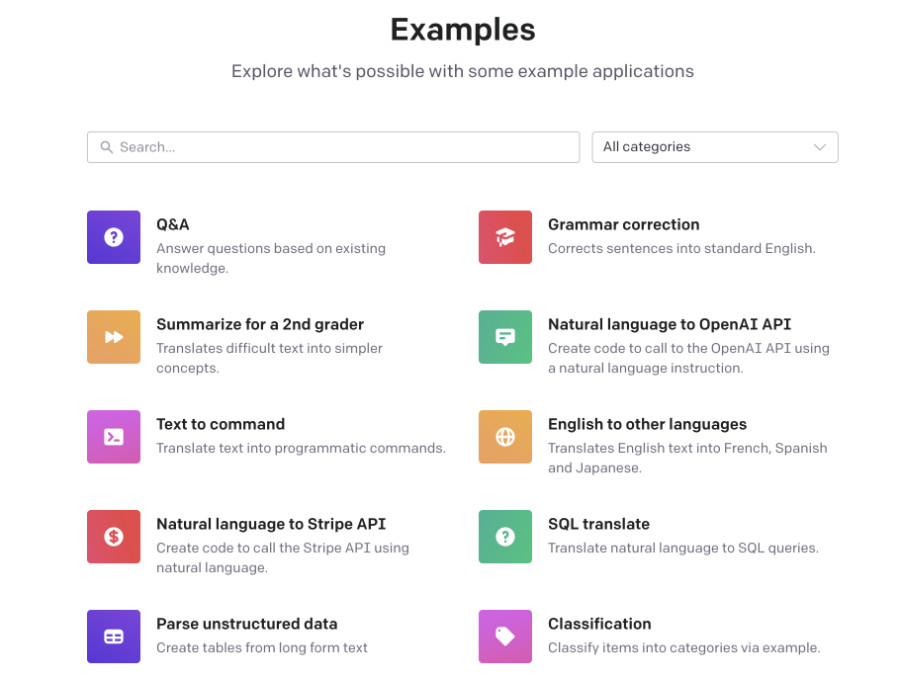

In addition to GPT-1, GPT-2, GPT-3, and GPT-4, OpenAI has produced several AI products over the years:

- OpenAI Gym: Toolkit for developing and comparing reinforcement learning algorithms

- OpenAI Universe: Software platform for measuring and training the general intelligence of an AI

- OpenAI Five: A system that plays the video game Dota 2 against itself

The GPT Family

Generative Pre-Trained Transformer (GPT) is a family of language models that are generally trained on a large corpus of text data to produce human-like text. The 'pre-training' in the name refers to the initial training process on a large text corpus, during which the model learns to predict the next word in a passage.
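To make "predicting the next word" concrete, here is a deliberately tiny sketch. It is not how GPT works internally (GPT uses a transformer network, not word counts); it only illustrates the training objective. The corpus and the function names are invented for this illustration.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count, for every word, which words follow it in the training text."""
    words = text.lower().split()
    followers = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        followers[current_word][next_word] += 1
    return followers


def predict_next_word(model, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    candidates = model.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]


# Hypothetical miniature corpus; real models are trained on billions of words.
corpus = "the cat sat on the mat . the cat ate the fish . the cat slept ."
model = train_bigram_model(corpus)

print(predict_next_word(model, "the"))      # most frequent word after "the"
print(predict_next_word(model, "unicorn"))  # word never seen during training
```

The counting model can only repeat what it has seen. A GPT model learns the same kind of prediction, but with billions of parameters and far longer contexts than a single preceding word.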

The introduction of these language models has ushered artificial intelligence into a new era, because a transformer-based language model of this kind is a machine learning system that is not trained for one specific task.

GPT-1

In 2018, OpenAI developed a general, task-agnostic model (GPT-1), whose generative pre-training was performed on a large corpus of books. The model combined unsupervised pre-training with supervised fine-tuning, an approach the authors describe as semi-supervised. The original GPT paper showed how a generative language model is capable of acquiring world knowledge and processing long-range dependencies.

Semi-supervised learning involves three components:

- Unsupervised Language Modeling ('pre-training'): A standard language model was used for unsupervised learning.

- Supervised Fine-Tuning: Maximizing the likelihood of a specific label given certain features.

- Task-specific Input Transformations: Converting inputs into ordered sequences.
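The third component can be sketched in a few lines. The idea is that a structured task, such as "does this premise imply this hypothesis?", is flattened into one ordered token sequence that a pre-trained language model can read. The marker strings and function names below are placeholders chosen for this illustration, and whitespace splitting stands in for a real subword tokenizer.

```python
# Placeholder marker tokens (assumed for illustration, not the real vocabulary).
START, DELIMITER, EXTRACT = "<s>", "$", "<e>"


def entailment_input(premise, hypothesis):
    """Flatten a premise/hypothesis pair into one ordered sequence."""
    return [START, *premise.split(), DELIMITER, *hypothesis.split(), EXTRACT]


def similarity_inputs(sentence_a, sentence_b):
    """Similarity has no natural order, so both orderings are produced."""
    return [
        entailment_input(sentence_a, sentence_b),
        entailment_input(sentence_b, sentence_a),
    ]


def multiple_choice_inputs(context, answers):
    """Build one sequence per candidate answer; the model scores each one."""
    return [entailment_input(context, answer) for answer in answers]


print(entailment_input("A chef is cooking", "Someone prepares food"))
print(multiple_choice_inputs("The sky is", ["blue", "a fish"]))
```

Because every task ends up as a plain sequence, the same pre-trained model can be fine-tuned for different tasks with only small changes on top.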

GPT-1 outperformed specifically trained, supervised state-of-the-art models in 9 out of 12 compared tasks. Another important achievement was its zero-shot learning capability.

GPT-2

In 2019, GPT-2 was released, though the full version was not immediately made available due to concerns about potential misuse. It was trained on the WebText corpus, which contains over 8 million documents totaling 40 gigabytes of text. The most important changes were a larger dataset and a larger model with 1.5 billion parameters, more than 10 times as many as GPT-1 with its 117 million parameters.

GPT-2 demonstrated that training on larger datasets with more parameters significantly improves a language model's ability to understand tasks. This laid the foundation for GPT-3 and ChatGPT.

GPT-3

OpenAI developed the GPT-3 model with 175 billion parameters, more than 100 times as many as GPT-2. Thanks to its large capacity, GPT-3 can write articles that are difficult to distinguish from human-written ones. It can also spontaneously perform tasks for which it was never explicitly trained.
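The growth factors between generations are easy to check with the parameter counts quoted in this article. The short calculation below uses only those three figures; the dictionary and function names are our own.

```python
# Parameter counts as quoted in the text above.
PARAMETERS = {
    "GPT-1": 117e6,   # 117 million
    "GPT-2": 1.5e9,   # 1.5 billion
    "GPT-3": 175e9,   # 175 billion
}


def growth_factor(smaller_model, larger_model):
    """How many times more parameters the larger model has."""
    return PARAMETERS[larger_model] / PARAMETERS[smaller_model]


for smaller, larger in [("GPT-1", "GPT-2"), ("GPT-2", "GPT-3")]:
    print(f"{smaller} -> {larger}: about {growth_factor(smaller, larger):.1f}x")
```

The step from GPT-1 to GPT-2 comes out at roughly 13 times, and the step from GPT-2 to GPT-3 at roughly 117 times, so "10 times" and "100 times" are rounded-down figures.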

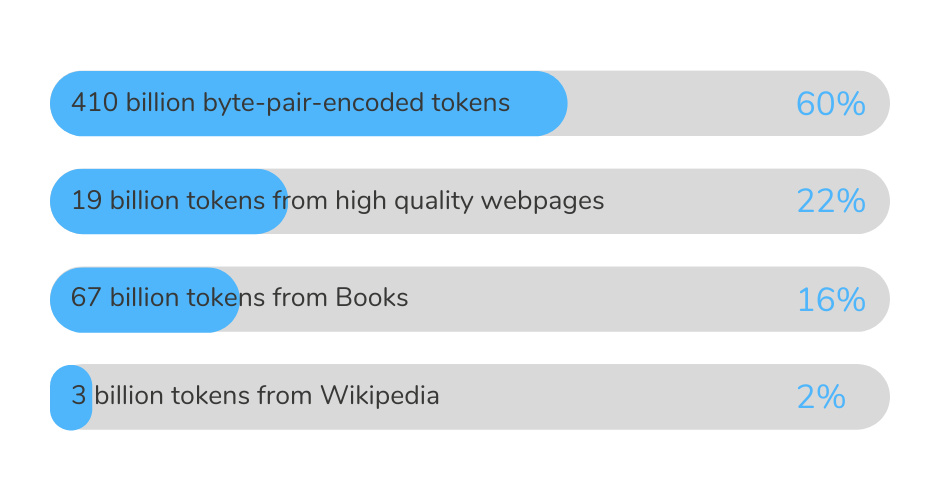

ChatGPT is the pinnacle of the GPT-3 family. It is a chatbot that was launched in November 2022. It was pre-trained on a vast and diverse text corpus with hundreds of billions of words.

With ChatGPT, we are already in the third generation of GPT models. This means that ChatGPT is an evolution of existing technology and not truly a revolution in text automation.

GPT-4

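Before the release, rumors claimed that GPT-4 would be trained with 100 trillion parameters, compared to 175 billion for GPT-3. OpenAI has not published a parameter count for GPT-4, so this figure remains unconfirmed. One declared focus is alignment, meaning better agreement between what users ask for in their prompts and what the model outputs. In general, OpenAI prefers to focus on optimizing GPT models rather than scaling them indefinitely.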

Text output with GPT-4 is not scalable: texts are still generated one at a time. The model will also continue to 'hallucinate.' The most important difference is the ability to process images as input. Like ChatGPT, GPT-4 was trained only on data up to 2021.

Learning Methods

The various GPT models use different learning methods:

Supervised Machine Learning

All data in the dataset is labeled, and algorithms learn to predict the output from the input data. Labeled data is data that has been annotated with the correct answer in advance, e.g., an image labeled 'cat' or 'dog'.
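A minimal sketch of supervised learning, assuming a made-up dataset of animals described by two measurements. The classifier is a 1-nearest-neighbour rule, chosen here only because it fits in a few lines; it is not a method used by GPT models.

```python
import math

# Hypothetical labeled dataset: (weight in kg, shoulder height in cm) -> label.
labeled_data = [
    ((4.0, 25.0), "cat"),
    ((5.5, 28.0), "cat"),
    ((3.5, 23.0), "cat"),
    ((20.0, 55.0), "dog"),
    ((30.0, 60.0), "dog"),
    ((12.0, 40.0), "dog"),
]


def predict_label(features, training_data):
    """Return the label of the closest training example (1-nearest neighbour)."""
    _, nearest_label = min(
        training_data,
        key=lambda example: math.dist(features, example[0]),
    )
    return nearest_label


print(predict_label((4.5, 26.0), labeled_data))   # small animal
print(predict_label((25.0, 58.0), labeled_data))  # large animal
```

The labels are what make this supervised: without the 'cat' and 'dog' annotations, the algorithm could only group similar animals, not name them.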

Unsupervised Machine Learning

All data is unlabeled. Algorithms learn to recognize inherent structures. Unlabeled data is data in its raw form.

Semi-supervised Learning

Some data is labeled, but most is not. This approach mixes supervised and unsupervised methods by combining a small set of labeled data with a large set of unlabeled data.

Reinforcement Machine Learning

The algorithm discovers through trial and error which actions yield the most reward. The goal is to determine ideal behavior in a given context.
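Trial and error can be illustrated with a classic toy problem, the multi-armed bandit. The sketch below uses an epsilon-greedy strategy: most of the time it picks the action that currently looks best, and occasionally it tries a random one. The reward probabilities are invented, and this is far simpler than the reinforcement learning used to train chatbots.

```python
import random


def run_epsilon_greedy(reward_probabilities, steps=5000, epsilon=0.1, seed=0):
    """Learn by trial and error which action pays off most often."""
    rng = random.Random(seed)
    n_actions = len(reward_probabilities)
    counts = [0] * n_actions             # how often each action was tried
    value_estimates = [0.0] * n_actions  # running average reward per action

    for _ in range(steps):
        if rng.random() < epsilon:
            action = rng.randrange(n_actions)  # explore: try something random
        else:
            # exploit: pick the action with the best estimate so far
            action = max(range(n_actions), key=lambda a: value_estimates[a])

        reward = 1.0 if rng.random() < reward_probabilities[action] else 0.0
        counts[action] += 1
        # incremental update of the average reward for this action
        value_estimates[action] += (reward - value_estimates[action]) / counts[action]

    return value_estimates, counts


# Hypothetical environment: three actions that succeed 20%, 50%, and 80% of the time.
estimates, counts = run_epsilon_greedy([0.2, 0.5, 0.8])
best_action = max(range(len(estimates)), key=lambda a: estimates[a])
print("estimated rewards:", [round(value, 2) for value in estimates])
print("best action found:", best_action)
```

The algorithm is never told which action is best. It only sees rewards, and its estimates improve as it collects more of them.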

'Wrong' Learning and Toxic Language

Training data occasionally contains toxic language. OpenAI has taken various measures to reduce the amount of unwanted content. Compared to GPT-1 and GPT-2, GPT-3 generates less toxic content thanks to these measures.

As early as 2021, OpenAI announced that sufficient safety measures had been taken to allow unrestricted access to its API.

What learning method does ChatGPT use?

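ChatGPT uses both supervised learning and reinforcement learning. Both approaches rely on human trainers to improve model performance. In the reinforcement learning stage, the trainers' rankings of model responses are used to build 'reward models', and the chatbot is then optimized against these with the Proximal Policy Optimization (PPO) algorithm.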

ChatGPT is trained to predict the next token based on preceding tokens.

What are Tokens?

Tokens are the result of a process called tokenization. It consists of delimiting and possibly classifying sections of a string of input characters. In GPT models, language understanding was improved through a generative pre-training process on a diverse corpus as part of natural language processing (NLP).
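The "delimiting and classifying" part can be shown with a small rule-based tokenizer. Note the assumption: GPT models do not tokenize this way. They use a learned subword scheme (byte-pair encoding), so a long word may be split into several tokens. The pattern and category names below are our own.

```python
import re

# Each alternative delimits one kind of token; the group name classifies it.
TOKEN_PATTERN = re.compile(
    r"(?P<number>\d+(?:\.\d+)?)"           # integers and decimals
    r"|(?P<word>[A-Za-z]+(?:'[A-Za-z]+)?)"  # words, optionally with an apostrophe
    r"|(?P<symbol>[^\sA-Za-z\d])"           # any other single non-space character
)


def tokenize(text):
    """Split text into (token, category) pairs."""
    return [(match.group(), match.lastgroup) for match in TOKEN_PATTERN.finditer(text)]


for token, category in tokenize("ChatGPT launched in 2022!"):
    print(f"{token!r:12} {category}")
```

The model never sees raw characters. It sees a sequence of such units, each mapped to a number, and learns which unit is likely to come next.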

Conclusion

The GPT family from OpenAI represents a remarkable advancement in artificial intelligence and NLG technologies. ChatGPT in particular offers many possibilities for text generation and human-like communication. In the following articles, we'll dive deeper into the subject.

ChatGPT in the Age of GPT-4 – The Real Capabilities of a ChatBot

After exploring the origin story and learning methods in the previous section, we will now examine the real capabilities of ChatGPT.

What can ChatGPT do?

ChatGPT is a sub-model of GPT-3. It is based on a transformer architecture and is pre-trained in an unsupervised manner by predicting the next token in any sequence of tokens, before being fine-tuned with human feedback.

If you strip away the hype, ChatGPT is essentially a very sophisticated chatbot. Unlike earlier language models, GPT models from GPT-2 onwards are multitask-capable.

ChatGPT particularly excels through the following rational capabilities:

It is also an excellent storyteller:

Productivity Increase

Overall, ChatGPT offers some amazing capabilities in terms of NLP and can significantly help boost your productivity. Instead of learning a complex system of codes, you can ask ChatGPT in your own words.

Automating Simple Tasks

Basic applications include handling routine tasks. It can help organize your schedule, plan meetings, answer messages, and remind you of deadlines.

Creating Viral Content for Social Media

ChatGPT can create social media posts. The chatbot was trained on massive amounts of text, reportedly including Twitter data, so it is familiar with formulations that work well in tweets.

Content Ideas

ChatGPT is ideal for new content ideas. Content creators constantly need new ideas for their clients – ChatGPT conducts impressive brainstorming sessions.

Example:

Prompt: "Develop a plot for a romance novel featuring a chef who always has the hiccups."

"The story revolves around Emma, a talented chef at an elegant restaurant. Emma has a little secret – she regularly gets the hiccups at the most inappropriate moments. One night, a handsome guest named Alex walks in..."

A bit cheesy perhaps, but overall not bad at all, right?

Goal-oriented Articles and Blog Posts

The more precisely you formulate your instructions, the higher the probability that the chatbot will create an engaging text with calls to action.

Coding

ChatGPT is truly revolutionary here. It can be used as a programming assistant, detect errors, and even create more complex code like a complete WordPress plugin.

Simplifying Complex Concepts

ChatGPT explains complicated concepts, processes, and phenomena in an easily understandable way – while remaining charming.

What can't ChatGPT do?

Outdated Training Dataset

The model has no direct access to the internet, and its training data ends in 2021. It will therefore not provide accurate answers to questions that require more recent information.

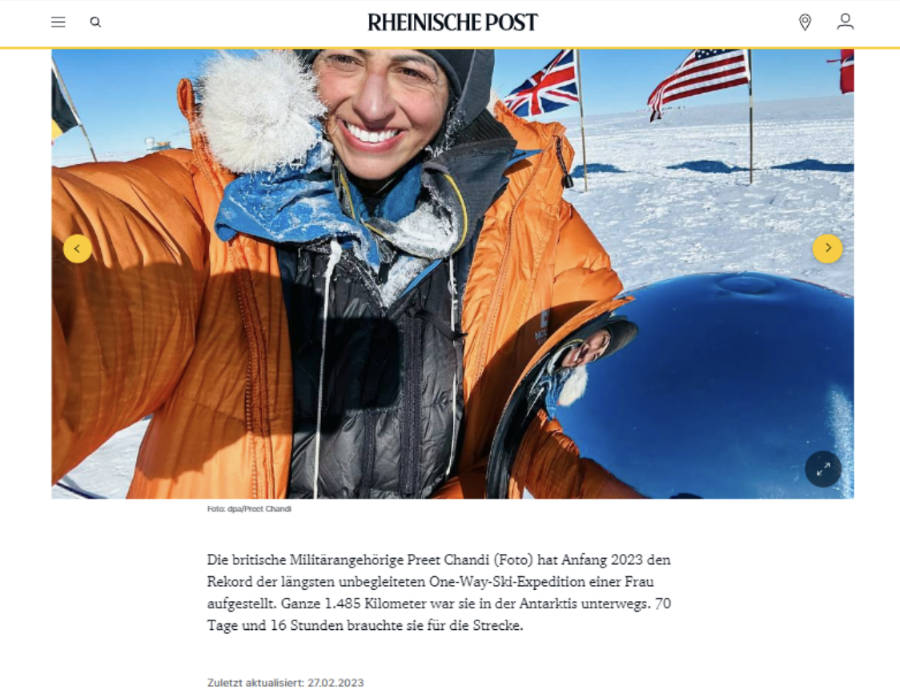

Research Phase and False Information

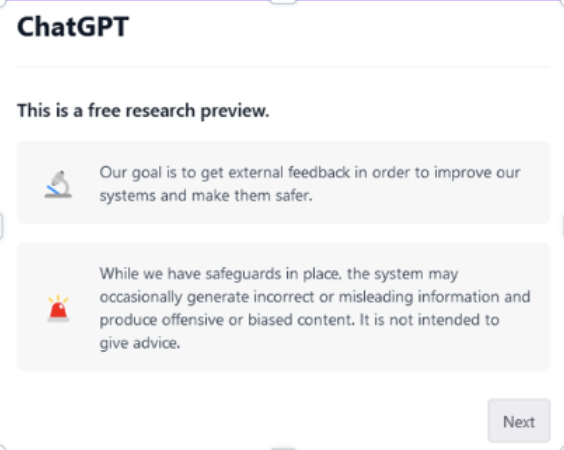

The chatbot is still in a research phase. Plausible-sounding but incorrect answers may be given.

The Hubris

ChatGPT simply cannot admit it doesn't know something. It is a probabilistic system – whenever a gap arises, it fills it with the next most likely token. We call this 'hallucinating' or 'confabulating.'
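The mechanism behind this can be sketched in a few lines. A language model assigns a score to every candidate token, converts the scores into probabilities, and samples one. Even when the scores are almost equal, which means the model is effectively guessing, it still outputs a confident-looking token. The prompt, scores, and function names below are invented for illustration.

```python
import math
import random


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    highest = max(scores.values())
    exponentials = {token: math.exp(score - highest) for token, score in scores.items()}
    total = sum(exponentials.values())
    return {token: value / total for token, value in exponentials.items()}


def sample_next_token(scores, rng):
    """Draw one token according to its probability."""
    probabilities = softmax(scores)
    tokens = list(probabilities)
    weights = [probabilities[token] for token in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]


# Hypothetical scores for completing "The capital of Atlantis is ...".
# The values are nearly identical: the model has no real knowledge here.
uncertain_scores = {"Paris": 0.2, "Poseidonia": 0.1, "Rome": 0.0, "unknown": -0.1}

rng = random.Random(42)
for token, probability in softmax(uncertain_scores).items():
    print(f"{token:12} {probability:.2f}")
print("model says:", sample_next_token(uncertain_scores, rng))
```

No candidate reaches even 30 percent, yet the sampling step always returns an answer. Nothing in this loop can say "I don't know" unless that phrase itself happens to be the most likely continuation.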

Problematic Service

The service was initially provided free of charge. Because of high demand, the servers are frequently overloaded and bottlenecks occur. Additionally, ChatGPT works best in English.

Systematic Errors

ChatGPT often gives incorrect answers to arithmetic problems and simple puzzles. Critics have also pointed out that the program adopts biases from its training data.

Although various filter functions have been introduced, toxic language remains a problematic aspect.

Ethical Concerns

ChatGPT is certainly a remarkable technology. However, it also poses dangers. The question of sustainability arises: operating ChatGPT costs an estimated 3 million USD per day. The pre-training of GPT-3 alone is estimated to have left a carbon footprint equivalent to driving a car to the moon and back.

OpenAI employed outsourced Kenyan workers to label toxic content. The staff received very low pay and described working with the toxic content as genuine 'torture.'

Conclusion

Although ChatGPT opens many new possibilities, it is important to recognize the limits of this heuristic technology. A Text Robot represents a more reliable, deterministic, rule-based system. Unlike with ChatGPT, its texts will never be fabricated or incorrect.

GPT-4 & ChatGPT vs. Text Robot: Which language model is better suited for which use cases?

After covering the origin story and real capabilities in the first two parts, we now turn to the various application areas.

GPT-4 and ChatGPT

OpenAI's GPT-4 and ChatGPT are both customizable NLP models that use machine learning to provide natural-sounding responses. GPT-4 generates various text types, while ChatGPT is specifically designed for conversations.

GPT-4, ChatGPT, Text Robot – Where are the differences?

All three are NLG technologies that generate texts in natural language. GPT-4 is a probabilistic language model. A Text Robot, on the other hand, works deterministically (data-to-text) based on a specific, structured dataset.

What is structured data?

Attributes that can be cleanly displayed in table form: product data from a PIM system, statistics from a football match, or data about a hotel location.

With a Text Robot, users always retain total control over the output. Texts are always formally and factually correct. They can be personalized and scaled.
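The data-to-text principle can be sketched as follows. This is not the Text Robot's actual implementation, which the article does not describe; it is a minimal illustration of deterministic, rule-based generation. The hotel records, field names, and wording rules are all hypothetical.

```python
def describe_hotel(record):
    """Render one structured record as text using fixed, inspectable rules."""
    sentences = [
        f"{record['name']} is a {record['stars']}-star hotel in {record['city']}."
    ]

    # Rule: choose the wording and unit based on the distance value.
    distance = record["beach_distance_m"]
    if distance <= 500:
        sentences.append(f"The beach is only {distance} metres away.")
    else:
        sentences.append(f"The beach is {distance / 1000:.1f} km away.")

    # Rule: mention the pool only if the data says there is one.
    if record.get("pool"):
        sentences.append("Guests can use the pool.")

    return " ".join(sentences)


# Hypothetical structured data, e.g. rows exported from a table.
hotels = [
    {"name": "Hotel Seeblick", "stars": 4, "city": "Lisbon",
     "beach_distance_m": 300, "pool": True},
    {"name": "Pension Alba", "stars": 3, "city": "Porto",
     "beach_distance_m": 1800, "pool": False},
]

for hotel in hotels:
    print(describe_hotel(hotel))
```

Every sentence traces back to a field in the record, and the same input always yields the same text. Running the loop over thousands of rows instead of two is what makes this approach scale.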

Unique Selling Point: Languages

The Text Robot generates texts in up to 110 languages – not just translated, but localized at native-speaker level. GPT models, in contrast, create individual texts in individual languages.

Scalability

The Text Robot can create hundreds of texts in just seconds. ChatGPT and GPT-4 can only generate individual texts, and their multilingual support is limited.

For which use cases are GPT models suitable?

GPT models are ideal for ideation or as a basis for blog posts. Data-to-text software is best suited for companies with high demand for variable text content.

Text Robot

Data-to-text is suitable for e-commerce, banking, finance, pharmaceutical industry, media, and publishers.

This is not magic. This is creative, rule-based and mapping-based programming!

ChatGPT and GPT-4

GPT tools help with brainstorming and are a formidable source of inspiration. Whenever new, brilliant ideas are needed, these language models are perfect.

What requirements does your text project have?

→ Text Robot, if:

- ✓ you want to update lots of content with a mouse click

- ✓ control over content quality is important

- ✓ you want to personalize texts, e.g., in salutations

- ✓ you need texts in multiple languages simultaneously

- ✓ errors and false statements are unacceptable

- ✓ you must avoid duplicate content

- ✓ ethically questionable statements are a no-go

- ✓ you need SEO-optimized texts

- ✓ you need a consistent tone and style

→ ChatGPT / GPT-4, if:

- ✓ you have a storytelling assignment

- ✓ you are writing blog posts

- ✓ you need social media content

- ✓ you want to chat or manage dialogues

- ✓ you need website templates

- ✓ you need code

- ✓ no data context is available

- ✓ you need individual texts quickly and cost-effectively

- ✓ you want to use images as prompts


Multimodality to Expand Creative Writing

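GPT-4 remains at its core a language model that outputs text, but it now also accepts images as input. Video and music were not among the input types announced at launch, and speech-to-text is handled by separate models rather than by GPT-4 itself.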

Text to Videos with the Text Robot

Customers who produce thousands of product descriptions via API calls can also produce thousands of product videos. These consist of images with sound, voice, and bullet-point descriptions. The scaling effect is immense.

Conclusion

GPT-4 and ChatGPT definitely write good, human-like texts. However, it is important to be clear about what kind of text you need. GPT models produce the most probable output. Since they don't know how the world works, they can neither understand nor evaluate the result. Are you sure you can be satisfied with that?