Why companies can't do without data mapping

Data mapping plays a central role in today's data processing. Anyone who merges data from different sources must map it in a structured manner – otherwise there is a risk of data loss, duplicates, or incorrect analyses. Data mapping is therefore a crucial step in data integration, in any data migration, and in building a data warehouse.

Companies that rely on consistent and transparent data structures not only achieve improved data quality, but also lay the foundation for automation, analysis, and informed decision-making. This article explains exactly what data mapping is, how to implement it successfully, and which tools facilitate automation.

Definition and significance of data mapping

Classification in computer science and business practice

Data mapping describes the targeted assignment of data fields in a data source to the corresponding fields in a target system, for example when transferring data to new software, a CRM system, or a data warehouse. It is usually the first step of data transformation, which often involves conversions, standardization, and other adjustments.

These assignments are usually described in the form of mapping rules, which document how data from a source is transformed, moved, or deleted. This creates a clear structure that serves as the technical and organizational basis for successful data migration.
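To make the idea of mapping rules more concrete, here is a minimal Python sketch. It assumes that a source record is a plain dictionary and that each rule states whether a field is moved (renamed), transformed (renamed and converted), or deleted. The field names and the `MappingRule` class are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Callable, Optional


@dataclass(frozen=True)
class MappingRule:
    """One documented rule: where a source field goes and what happens to it."""
    source: str
    action: str  # "move", "transform", or "delete"
    target: Optional[str] = None
    convert: Optional[Callable[[str], object]] = None


def to_iso_date(value: str) -> str:
    # Convert a date written as DD.MM.YYYY to ISO 8601 (YYYY-MM-DD).
    return datetime.strptime(value, "%d.%m.%Y").date().isoformat()


# Hypothetical rules for moving CRM contacts into a new target system.
RULES = [
    MappingRule("cust_name", "move", target="customer_name"),
    MappingRule("birth_date", "transform", target="date_of_birth", convert=to_iso_date),
    MappingRule("fax", "delete"),  # intentionally not migrated
]


def apply_rules(record: dict, rules: list) -> dict:
    """Build a target record from a source record according to the rules."""
    target = {}
    for rule in rules:
        if rule.source not in record or rule.action == "delete":
            continue
        value = record[rule.source]
        if rule.action == "transform":
            value = rule.convert(value)
        elif rule.action != "move":
            raise ValueError(f"unknown action: {rule.action}")
        target[rule.target] = value
    return target


source = {"cust_name": "Jane Doe", "birth_date": "31.12.1990", "fax": "0123-456"}
print(apply_rules(source, RULES))
# {'customer_name': 'Jane Doe', 'date_of_birth': '1990-12-31'}
```

Keeping the rules as data, separate from the function that applies them, is what makes them documentable and reviewable on their own.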

For many companies, mapping is not optional, but mandatory—whether it's for setting up new systems, integrating external data, or improving internal data quality. It ensures a consistent view of information, increases efficiency, reduces manual effort, and supports compliance requirements.

Why mapping is crucial

If you don't assign data correctly, you risk making the wrong decisions or, in the worst case, causing data protection problems and system failures.

Why data mapping is crucial for businesses

Data integration without mapping? A risk for the entire organization

Many companies now rely on digital processes—whether in sales, accounting, or customer management. But without clear data mapping, the integration of these systems remains piecemeal. When data from CRM, ERP, or shop systems is merged, data mapping is indispensable.

This is because different data formats, a lack of standards, or inconsistent data types make data migration complex. Without precise mapping rules, chaos ensues, with negative consequences for data quality, consistency, and ultimately decision-making within the company.
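Inconsistent date formats are a typical example. The following sketch assumes two hypothetical sources, a CRM that exports dates as DD.MM.YYYY and a shop system that uses MM/DD/YYYY, and normalizes both to ISO 8601 before the data is merged. Without a source-specific rule, a value such as 03/04/2024 would be ambiguous.

```python
from datetime import datetime

# Hypothetical per-source date formats; in practice these would be part of
# the documented mapping rules for each source system.
SOURCE_DATE_FORMATS = {
    "crm": "%d.%m.%Y",   # e.g. 04.03.2024
    "shop": "%m/%d/%Y",  # e.g. 03/04/2024
}


def normalize_date(source_system: str, value: str) -> str:
    """Parse a date using the format of its source system and return ISO 8601."""
    fmt = SOURCE_DATE_FORMATS[source_system]
    return datetime.strptime(value, fmt).date().isoformat()


# The same calendar day arrives in two different notations.
print(normalize_date("crm", "04.03.2024"))   # 2024-03-04
print(normalize_date("shop", "03/04/2024"))  # 2024-03-04
```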

Data mapping ensures order here: it makes it clear where the data comes from, how it should be interpreted, and where it belongs in the target system. For the entire organization, this is the basis for transparency, security, and optimal use of all information resources.

Data mapping as the key to successful data migration

Successful data migration depends crucially on the quality of the mapping. If source and target fields are not assigned accurately, there is a risk of:

Good mapping not only documents the technical path, but also describes the purpose of each transformation—for example, when transferring data to a data warehouse or connecting new SaaS solutions.

Tip: Using a central mapping repository significantly reduces the manual effort required for future system changes or data modifications.
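One way to keep mappings in a central repository is to store them as data rather than code. This sketch assumes a hypothetical JSON document (which could live in a database or a version-controlled file) that names a transform for each field; the code only resolves those names against a small registry. Changing a mapping then means editing the JSON, not the pipeline.

```python
import json

# Hypothetical content of a central mapping repository (e.g. a versioned JSON file).
MAPPING_JSON = """
{
  "version": 3,
  "fields": [
    {"source": "email",   "target": "email_address", "transform": "lower"},
    {"source": "country", "target": "country_code",  "transform": "upper"},
    {"source": "city",    "target": "city",          "transform": "strip"}
  ]
}
"""

# Registry of named transforms that the repository may reference.
TRANSFORMS = {
    "lower": lambda v: v.strip().lower(),
    "upper": lambda v: v.strip().upper(),
    "strip": lambda v: v.strip(),
}


def load_mapping(text: str) -> dict:
    mapping = json.loads(text)
    # Fail early if the repository references a transform the code does not know.
    for field in mapping["fields"]:
        if field["transform"] not in TRANSFORMS:
            raise ValueError(f"unknown transform: {field['transform']}")
    return mapping


def map_record(record: dict, mapping: dict) -> dict:
    return {
        f["target"]: TRANSFORMS[f["transform"]](record[f["source"]])
        for f in mapping["fields"]
        if f["source"] in record
    }


mapping = load_mapping(MAPPING_JSON)
print(mapping["version"], map_record({"email": " Jane@Example.COM", "country": "de", "city": " Berlin "}, mapping))
```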

Improved data quality through structured data processing

Data quality is not a matter of chance, but of structure. Through well-designed data mapping, you can:

One key effect: transparency. Companies know where the data comes from, who uses it, and how it moves through the systems. This clarity is crucial—especially for real-time evaluations or when connecting external data sources.
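Transparency about where data comes from can be built into the mapping itself. In this minimal sketch, every target value carries lineage metadata (source system and source field). The structure and the names `LineageValue` and `map_with_lineage` are assumptions for illustration; dedicated data catalogs and lineage tools handle this at a much larger scale.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LineageValue:
    """A mapped value plus the place it originally came from."""
    value: object
    source_system: str
    source_field: str


def map_with_lineage(record: dict, source_system: str, field_map: dict) -> dict:
    """Map fields 1:1 and record where each target value came from."""
    return {
        target: LineageValue(record[source], source_system, source)
        for source, target in field_map.items()
        if source in record
    }


# Hypothetical ERP record with cryptic source field names.
result = map_with_lineage(
    {"CUST_NO": "K-1001", "REV": 250.0},
    source_system="erp",
    field_map={"CUST_NO": "customer_id", "REV": "revenue"},
)
for target, item in result.items():
    print(f"{target} = {item.value!r}  (from {item.source_system}.{item.source_field})")
```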

A well-founded implementation of mapping processes improves not only operational performance but also the strategic direction of data management.

Relevant content tip

If you want to delve deeper into data structure automation, you can find more detailed information on data modeling and automation here.

Also worth reading: Creating an online database – explained step by step

Implementation in practice: How can data mapping be successfully implemented step by step?

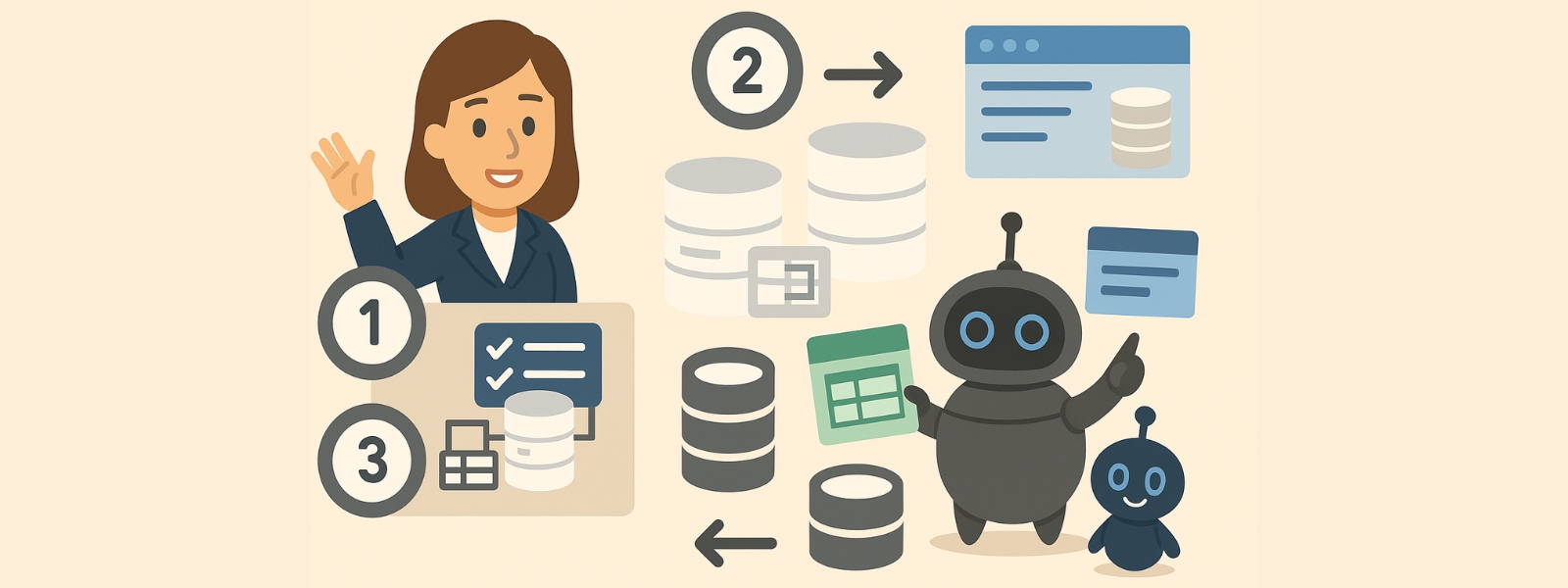

From definition to validation: an overview of the implementation process

Implementing a successful data mapping process requires more than just technical expertise. It requires a clear structure, strategic definition of mappings, and the use of appropriate tools. Many companies underestimate the complexity that arises from the multitude of systems, data formats, and target definitions. A well-thought-out implementation is therefore crucial.

Here is a proven procedure:

Step 1: Define source and target systems

First, identify the relevant data sources and target systems. Every data model involved should be considered, from CRM to operational databases to data warehouses.

A clear definition reduces subsequent errors during processing and validation.
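Writing the source and target structures down explicitly makes gaps visible early. The sketch below assumes hypothetical field lists for a legacy CRM export and a new CRM, plus a draft field assignment, and reports which required target fields have no source yet and which source fields are not used.

```python
# Hypothetical field inventories of the source (legacy CRM export) and target (new CRM).
SOURCE_FIELDS = {"cust_name", "mail", "phone", "created"}
TARGET_FIELDS = {"customer_name", "email", "created_at", "segment"}
REQUIRED_TARGET_FIELDS = {"customer_name", "email", "segment"}

# Draft assignment of source fields to target fields.
FIELD_MAP = {"cust_name": "customer_name", "mail": "email", "created": "created_at"}


def check_coverage(source_fields, target_fields, field_map, required):
    """Report problems in a draft mapping before any data is moved."""
    mapped_targets = set(field_map.values())
    return {
        "unknown_sources": sorted(set(field_map) - source_fields),
        "unknown_targets": sorted(mapped_targets - target_fields),
        "unmapped_required": sorted(required - mapped_targets),
        "unused_sources": sorted(source_fields - set(field_map)),
    }


print(check_coverage(SOURCE_FIELDS, TARGET_FIELDS, FIELD_MAP, REQUIRED_TARGET_FIELDS))
```

Here the report would flag `segment` as a required target field without a source and `phone` as a source field nobody has decided about yet.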

Step 2: Analyze data fields and create mapping rules

Now it's time for the actual data mapping—i.e., creating mapping rules. This involves not only 1:1 mappings, but also:

This creates a documented set of rules that can be easily adapted with each update—a key advantage for maintenance and long-term optimization.
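Beyond simple 1:1 assignments, rules often combine or split fields. This sketch shows two common cases with assumed field names: an n:1 rule that merges first and last name into one field, and a 1:n rule that splits a combined address line into street, postal code, and city. The address format ("Street 1, 12345 City") is an assumption; real address data usually needs more robust parsing.

```python
import re

# Assumed address layout: "<street and number>, <5-digit postal code> <city>"
ADDRESS_PATTERN = re.compile(r"^(?P<street>.+?),\s*(?P<zip>\d{5})\s+(?P<city>.+)$")


def merge_name(record: dict) -> dict:
    """n:1 rule: first_name + last_name -> full_name."""
    parts = [record.get("first_name", "").strip(), record.get("last_name", "").strip()]
    return {"full_name": " ".join(p for p in parts if p)}


def split_address(record: dict) -> dict:
    """1:n rule: address -> street, postal_code, city."""
    match = ADDRESS_PATTERN.match(record["address"].strip())
    if match is None:
        raise ValueError(f"cannot split address: {record['address']!r}")
    return {
        "street": match["street"],
        "postal_code": match["zip"],
        "city": match["city"],
    }


source = {"first_name": "Jane", "last_name": "Doe", "address": "Main Street 5, 10115 Berlin"}
target = {**merge_name(source), **split_address(source)}
print(target)
```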

🔍 Tip: Talend offers a good overview of graphical mapping tools and automated processes—ideal for reducing manual effort.

Step 3: Validation, testing, and live operation

Before data goes live, it must be tested, validated, and approved. This includes:

Only after testing succeeds does the rollout to live operation take place, ideally with monitoring, logging, and automated documentation.
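Part of this validation can be automated. The sketch below checks mapped records against simple, hypothetical target constraints (required fields, expected types, a basic email pattern) and collects the errors per record, so that a test run is approved only when every error list is empty. Production setups would more likely rely on a schema-validation library or database constraints.

```python
import re

EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")

# Hypothetical target constraints: field -> (required, expected type)
TARGET_RULES = {
    "customer_id": (True, str),
    "email": (True, str),
    "revenue": (False, float),
}


def validate(record: dict) -> list:
    """Return a list of human-readable errors for one mapped record."""
    errors = []
    for field, (required, expected_type) in TARGET_RULES.items():
        if field not in record or record[field] in (None, ""):
            if required:
                errors.append(f"{field}: missing")
            continue
        if not isinstance(record[field], expected_type):
            errors.append(f"{field}: expected {expected_type.__name__}")
    if record.get("email") and not EMAIL_PATTERN.match(str(record["email"])):
        errors.append("email: invalid format")
    return errors


test_batch = [
    {"customer_id": "K-1", "email": "a@example.com", "revenue": 10.0},
    {"customer_id": "K-2", "email": "not-an-email"},
    {"email": "b@example.com", "revenue": "12,50"},  # revenue was never converted
]
report = {i: validate(r) for i, r in enumerate(test_batch)}
ready_for_rollout = all(not errs for errs in report.values())
print(report)
print("ready for rollout:", ready_for_rollout)
```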

Tools and resources for optimization

As data complexity increases, it is particularly worthwhile to use specialized tools, such as:

You can find more information on this in the comparison of content management systems with automation – particularly helpful for smaller businesses.

Data mapping in the data warehouse: creating structure, controlling data flows

Why data mapping is essential in the warehouse

A data warehouse is the backbone of data-driven decisions. This is where information from a wide variety of source systems—CRM, ERP, e-commerce, marketing platforms, or external APIs—converges. For this data integration to work, you need well-thought-out data mapping that structures and secures the entire data movement.

In the warehouse, one rule applies: only data that is clearly assigned can later be used in reports, dashboards, and analyses. Unclear formats, duplicate data records, or missing conversions lead to unusable results, with direct consequences for decisions and compliance.

Data mapping at the heart of every ETL process

Mapping plays a central role in the ETL (Extract – Transform – Load) process:

In the transformation step in particular, the focus is on data types, formats, recoding, and the correct assignment of all fields, for example when addresses are standardized or number formats are adjusted.
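The three ETL phases can be illustrated in a few lines. This sketch assumes a small, hypothetical CSV export with German number formatting (1.234,50) as the source and an in-memory SQLite table as a stand-in for the warehouse. The mapping happens in the transform step: fields are renamed, amounts are converted to decimal numbers, and country codes are standardized.

```python
import csv
import io
import sqlite3

# Extract: a hypothetical semicolon-separated export from a shop system.
RAW_CSV = """order_no;amount;country
A-100;1.234,50;de
A-101;99,90; AT
"""


def extract(text: str) -> list:
    return list(csv.DictReader(io.StringIO(text), delimiter=";"))


def parse_german_number(value: str) -> float:
    # "1.234,50" -> 1234.5: drop thousands separators, use '.' as decimal point.
    return float(value.strip().replace(".", "").replace(",", "."))


def transform(rows: list) -> list:
    # Map order_no -> order_id, amount -> amount_eur, country -> country_code.
    return [
        (row["order_no"].strip(), parse_german_number(row["amount"]), row["country"].strip().upper())
        for row in rows
    ]


def load(rows: list, conn: sqlite3.Connection) -> None:
    conn.execute(
        "CREATE TABLE IF NOT EXISTS fact_orders "
        "(order_id TEXT PRIMARY KEY, amount_eur REAL, country_code TEXT)"
    )
    conn.executemany("INSERT INTO fact_orders VALUES (?, ?, ?)", rows)
    conn.commit()


conn = sqlite3.connect(":memory:")
load(transform(extract(RAW_CSV)), conn)
print(conn.execute("SELECT * FROM fact_orders ORDER BY order_id").fetchall())
```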

InsightSoftware provides a more in-depth explanation of the mapping role in ETL in this technical article:

What is Data Mapping – InsightSoftware

Mapping in the data warehouse: typical challenges

When implementing mappings in the warehouse, companies encounter numerous challenges:

To master this complexity, structured procedures and powerful tools are needed that can react quickly to changes without having to rebuild the entire pipeline.

Documentation and maintenance: a must in data management

A key problem for many organizations is missing or incomplete documentation of mapping processes. Especially when staff change, new tools are introduced, or systems are updated, the rules governing data flows must be documented clearly and comprehensibly.
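One pragmatic way to keep documentation current is to generate it from the same rule definitions the pipeline executes, so the two cannot drift apart. This sketch assumes the rules are kept as simple dictionaries with a human-readable purpose field and renders them as a Markdown table, for example for a wiki page or README.

```python
# Hypothetical mapping rules, each with a documented purpose.
RULES = [
    {"source": "crm.cust_name", "target": "dwh.customer_name", "rule": "copy", "purpose": "Display name in reports"},
    {"source": "crm.birth_date", "target": "dwh.date_of_birth", "rule": "DD.MM.YYYY -> ISO 8601", "purpose": "Age segmentation"},
    {"source": "crm.fax", "target": "-", "rule": "delete", "purpose": "No longer used"},
]


def render_markdown(rules: list) -> str:
    """Render mapping rules as a Markdown table."""
    columns = ["source", "target", "rule", "purpose"]
    lines = [
        "| " + " | ".join(columns) + " |",
        "| " + " | ".join("---" for _ in columns) + " |",
    ]
    for rule in rules:
        # Escape pipe characters so they don't break the table layout.
        cells = [str(rule[c]).replace("|", "\\|") for c in columns]
        lines.append("| " + " | ".join(cells) + " |")
    return "\n".join(lines)


print(render_markdown(RULES))
```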

Recommendation:

This not only increases transparency, but also ensures the long-term performance of the entire data management system.

Practical tip for small businesses

Even if a warehouse sounds like a major project, small businesses also benefit enormously from a clear mapping structure, for example when connecting shop systems or migrating to a new CRM. Once the assignments are clarified, future data migrations can be handled much faster and more securely.

The article on database creation for SMEs provides additional inspiration—also interesting in conjunction with a warehouse approach.

Optimization of mapping processes and sustainable data quality

Why mapping is not a one-time project

Many companies treat data mapping as a project with a clear beginning and end. In practice, however, this is rarely the case. Systems change, new data sources are added, and the requirements for real-time processing, compliance, and reporting are constantly growing. That is why the continuous optimization of mapping processes is crucial for long-term success.
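Because sources change over time, it helps to check incoming data against what the mapping expects on every run. This sketch compares a batch of records with a hypothetical expected source schema and reports new fields that no rule covers yet, expected fields that have disappeared, and fields whose data type has changed.

```python
from collections import defaultdict

# Hypothetical source schema the current mapping was designed for.
EXPECTED_SOURCE_SCHEMA = {"order_no": str, "amount": float, "country": str}


def detect_drift(batch: list, expected: dict) -> dict:
    """Compare observed fields and types in a batch with the expected schema."""
    seen_types = defaultdict(set)
    for record in batch:
        for field, value in record.items():
            seen_types[field].add(type(value).__name__)

    return {
        # Present in the data, but no mapping rule knows about it yet.
        "new_fields": sorted(set(seen_types) - set(expected)),
        # Expected by the mapping, but absent from every record in the batch.
        "missing_fields": sorted(set(expected) - set(seen_types)),
        # Observed types (None ignored) for fields that deviate from the expected type.
        "type_changes": {
            field: sorted(types - {"NoneType"})
            for field, types in seen_types.items()
            if field in expected and types - {"NoneType"} - {expected[field].__name__}
        },
    }


batch = [
    {"order_no": "A-200", "amount": "49.90", "country": "DE", "voucher_code": "SPRING"},
    {"order_no": "A-201", "amount": 19.5, "country": None},
]
print(detect_drift(batch, EXPECTED_SOURCE_SCHEMA))
```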

Potential for better results and less effort

Well-structured data mappings can be made more efficient through various measures:

Using smart tools not only saves time, but also reduces the risk of errors in data processing and deletion. At the same time, it improves the overview of the entire data flow—from the source to the target structure.

If you would like to delve deeper into the theoretical basics, you will find a good introduction at StudySmarter on the topic of data mapping.

Measurable improvement in data quality

Optimized data mapping has a direct impact on the quality of stored and processed data:

High data quality is the foundation for reliable analysis, accurate forecasts, and automated business processes, especially for data-driven companies.

Relevant tool tip

Many smaller companies underestimate the complexity of growing data volumes. Data automation tools, such as those presented in the AI text generator test, help you keep track of everything—even beyond pure content.

Don't forget automation and monitoring

An important step in the implementation of sustainable mapping processes is the automation of monitoring:

This creates a controlled, documented data process that can be maintained over many years – regardless of how much the IT environment changes.
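Automated monitoring can start small. As a minimal sketch, assuming a mapping function that raises an exception for records it cannot map, a run can count successes and failures, log each failure, and mark the run as failed once the error rate exceeds a threshold. The function names and the 5% threshold are hypothetical example values, not a recommendation.

```python
import logging
from typing import Callable

logging.basicConfig(level=logging.INFO, format="%(levelname)s %(message)s")
log = logging.getLogger("mapping-monitor")


def run_mapping(records: list, map_record: Callable[[dict], dict], max_error_rate: float = 0.05) -> dict:
    """Map a batch, log failures, and report whether the run stayed within the error budget."""
    mapped, failures = [], 0
    for index, record in enumerate(records):
        try:
            mapped.append(map_record(record))
        except (KeyError, ValueError) as exc:
            failures += 1
            log.warning("record %d could not be mapped: %r", index, exc)
    error_rate = failures / len(records) if records else 0.0
    ok = error_rate <= max_error_rate
    log.info("mapped=%d failed=%d error_rate=%.1f%%", len(mapped), failures, error_rate * 100)
    if not ok:
        log.error("error rate %.1f%% exceeds threshold %.1f%%", error_rate * 100, max_error_rate * 100)
    return {"mapped": mapped, "failed": failures, "error_rate": error_rate, "ok": ok}


def example_map_record(record: dict) -> dict:
    # Hypothetical mapping: requires 'id' and a numeric 'amount'.
    return {"order_id": record["id"], "amount_eur": float(record["amount"])}


summary = run_mapping(
    [{"id": "A-1", "amount": "10.5"}, {"id": "A-2", "amount": "n/a"}, {"amount": "3"}],
    example_map_record,
)
print(summary["failed"], summary["ok"])
```

In a real setup, the error-level log entry would typically be routed to an alerting system rather than just printed.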

Practical example: How data mapping works in IT and content management

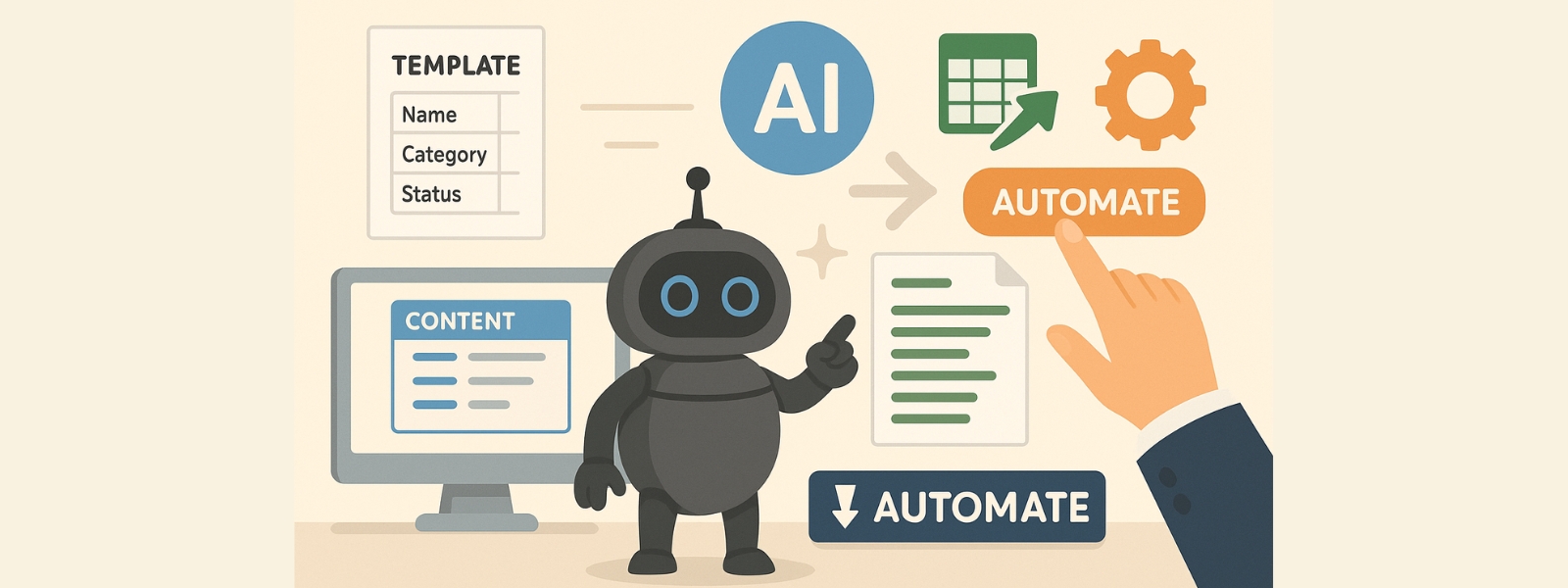

A realistic scenario: structured data integration for content

A medium-sized company wants to automatically generate content such as blog articles, newsletters, or industry news from an internal database—for example, from product data, CRM fields, or event lists. The challenge: These data sources are structured differently from a technical perspective, the formats are inconsistent, and the data structure is difficult to scale.

The solution: data mapping, which turns raw information into structured content. Every data assignment is documented, with clear rules for transformation, validation, and, if necessary, deletion. Raw data thus becomes usable text modules, without manual copy-and-paste or switching between systems.
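To illustrate the general principle (not the internals of any particular product), here is a generic sketch of turning mapped data fields into a text module. It assumes hypothetical product fields and uses Python's standard `string.Template`. A missing source field raises a `KeyError`, so incomplete text is never produced silently.

```python
from string import Template

# Hypothetical mapping from internal product-database fields to template placeholders.
FIELD_MAP = {"prod_title": "name", "price_eur": "price", "launch": "launch_date"}

TEMPLATE = Template("New in our range: $name, available from $launch_date for $price EUR.")


def build_text(raw: dict) -> str:
    # A missing source field raises KeyError here instead of yielding a gap in the text.
    values = {placeholder: raw[source] for source, placeholder in FIELD_MAP.items()}
    # Format the price with two decimals; the date is assumed to be already in ISO format.
    values["price"] = f"{float(values['price']):.2f}"
    return TEMPLATE.substitute(values)


print(build_text({"prod_title": "Ergo Chair X", "price_eur": "349", "launch": "2024-05-01"}))
```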

Implementation with uNaice ContentNaicer

This is where uNaice ContentNaicer comes in – an intelligent content system based on automated mapping. It combines structured data processing with AI-supported text generation. Implementation takes place in several steps:

This creates a seamless process—from raw information to publishable output, completely without manual editing effort.

If you would like to test the system, you can arrange a demo and experience uNaice ContentNaicer in your own use case.

Data protection, transparency, and traceability

Thanks to the complete documentation of the mapping rules, ContentNaicer meets the highest standards of transparency and traceability. This not only allows content to be created efficiently but also ensures that compliance requirements are met and data deletions are systematically mapped.

For a more in-depth understanding of mapping and data protection, take a look at the article by BigID:

Data Mapping and Data Protection – BigID.

Conclusion: From data field to content – automated and scalable

An automated system such as ContentNaicer is a decisive advantage, especially in dynamic environments where new content is needed regularly. The combination of structured mapping, data integration, and intelligent text generation creates efficiency, consistency, and relevance with minimal effort for the team.

Conclusion and recommendations for practical implementation

Why data mapping is a fundamental part of any digital strategy

Whether it's a CRM system, e-commerce backend, or internal editorial process, anyone who uses data must understand it, assign it correctly, and document it clearly. This is exactly what well-designed data mapping does—not only in IT, but throughout the entire organization.

Mapping data fields, integrating them cleanly into existing processes, and connecting them to tools and automation are no longer optional extras; they are a necessary foundation for functioning systems, transparent processes, and scalable content.

The three most important recommendations at a glance

A final tool recommendation

If you want to delve deeper into the topic of mapping tools, Astera provides a good overview of commercial and freely available software solutions – from low-code platforms to ETL tools with integrated mapping logic.

Final thought: Content from data – automated, up-to-date, efficient

If you want to regularly translate structured data into content – whether for customer portals, internal communication, or your blog – it's worth taking a look at uNaice ContentNaicer. The system intelligently processes raw data, converts it automatically, and ensures clean, publication-ready content.

Get started now with a demo or test request – directly from your existing systems.